The UConn Library and the School of Engineering are developing new technology that applies machine learning to handwritten text recognition, with the aim of giving researchers better access to handwritten historical documents.

Handwritten documents are essential for researchers, but they are often inaccessible because they cannot be searched, even after they have been digitized. The Connecticut Digital Archive, a project of the UConn Library, is working to change that with a $24,277 grant awarded through the Catalyst Fund of LYRASIS, a nonprofit organization that supports access to academic, scientific, and cultural heritage.

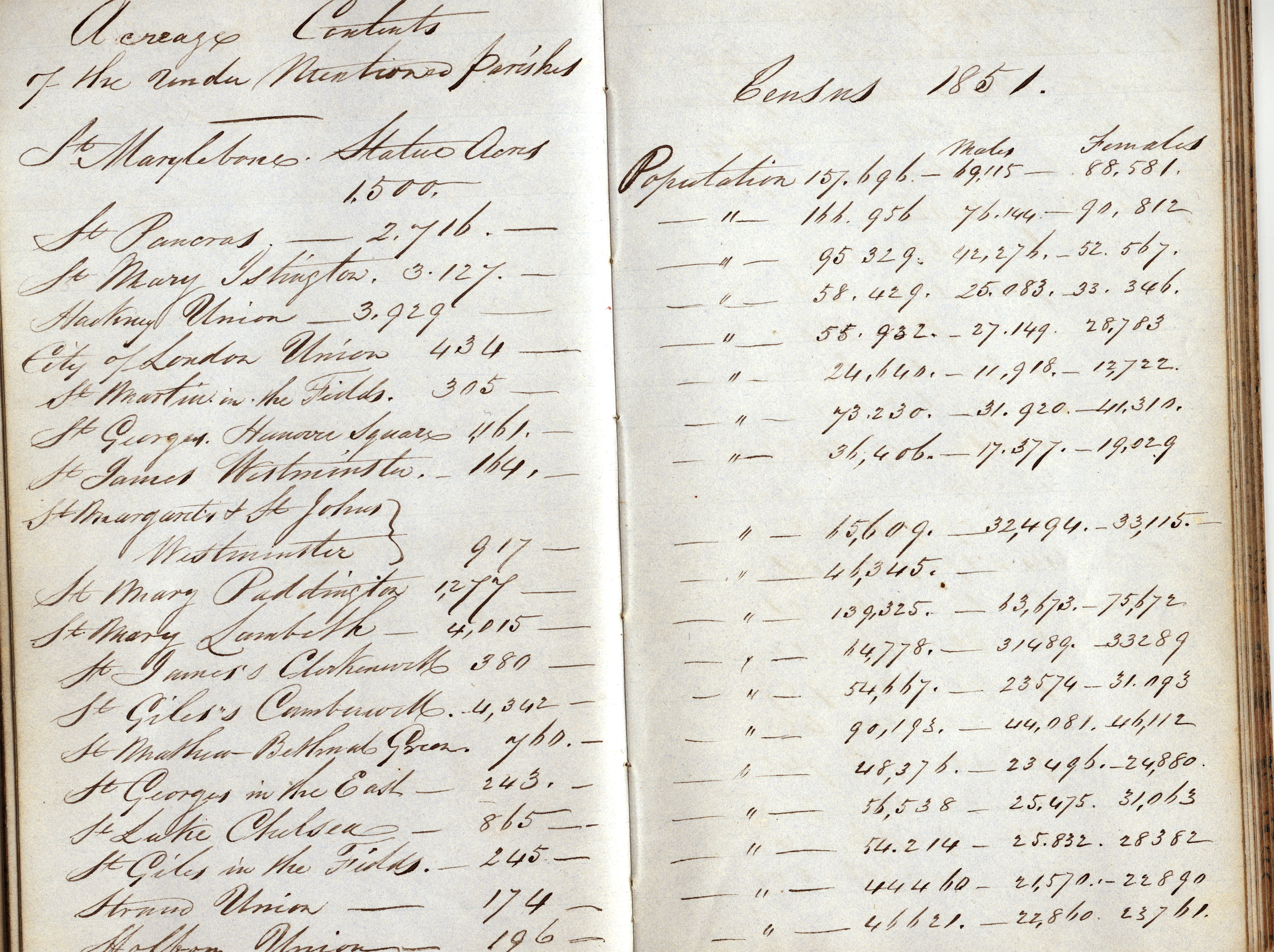

Archives and special collections from across Connecticut fill the Connecticut Digital Archive (CTDA), providing online access to a wealth of historic materials. However, because the handwriting in many of these manuscripts is irregular, the historical information they contain is inaccessible to Optical Character Recognition (OCR), a technology used for more than 20 years to make documents discoverable by converting images of text into machine-readable text.

To address this, historians and computer scientists have applied machine learning to handwritten text recognition (HTR), but only in a relatively small number of projects, using varied techniques and with varying success. In the summer of 2019, the Library, in partnership with the Massachusetts Historical Society, Greenhouse Studios, and the UConn School of Engineering, created a set of more than 16,000 images of 22 different characters from the John Quincy Adams Papers.

Characters from the Adams Papers were used to develop a set of algorithms designed to recognize patterns in those images. Together, the algorithms form a neural network, a system loosely modeled on the human brain, that learns from labeled images of handwritten characters, known as training examples. As the number of training examples grows, the network learns more and becomes more accurate at identifying individual letters and words.
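For readers curious about the mechanics, here is a minimal sketch of how a network can learn from labeled training examples. It is not the project's code. It assumes synthetic stand-in data (one random 64-pixel "prototype" image per character, plus noise) instead of the Adams Papers images. It also uses a single-layer softmax classifier trained by gradient descent, which is far simpler than the network the team built. Every name below is hypothetical.

```python
import numpy as np

N_CLASSES = 22  # the pilot covered 22 characters
N_PIXELS = 64   # hypothetical: each image flattened to 8x8 = 64 pixels

# Hypothetical stand-in data: one random "prototype" image per character.
PROTOTYPES = np.random.default_rng(0).random((N_CLASSES, N_PIXELS))


def make_examples(n_per_class, noise=0.3, seed=1):
    """Return noisy copies of each prototype together with their character labels."""
    rng = np.random.default_rng(seed)
    labels = np.repeat(np.arange(N_CLASSES), n_per_class)
    images = PROTOTYPES[labels] + rng.normal(0.0, noise, (labels.size, N_PIXELS))
    return images, labels


def softmax(logits):
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    exp = np.exp(logits)
    return exp / exp.sum(axis=1, keepdims=True)


def train(images, labels, epochs=300, lr=0.5):
    """Fit a single-layer softmax network with full-batch gradient descent."""
    mean = images.mean(axis=0)  # center inputs so gradient descent stays stable
    x = images - mean
    onehot = np.eye(N_CLASSES)[labels]
    weights = np.zeros((N_PIXELS, N_CLASSES))
    bias = np.zeros(N_CLASSES)
    for _ in range(epochs):
        # Gradient of the average cross-entropy loss with respect to the logits.
        grad = (softmax(x @ weights + bias) - onehot) / len(labels)
        weights -= lr * x.T @ grad
        bias -= lr * grad.sum(axis=0)
    return mean, weights, bias


def accuracy(model, images, labels):
    """Fraction of images whose highest-scoring class matches the true label."""
    mean, weights, bias = model
    predictions = np.argmax((images - mean) @ weights + bias, axis=1)
    return float(np.mean(predictions == labels))


if __name__ == "__main__":
    train_x, train_y = make_examples(50, seed=1)  # training examples
    test_x, test_y = make_examples(20, seed=2)    # held-out examples
    model = train(train_x, train_y)
    print(f"held-out accuracy: {accuracy(model, test_x, test_y):.2%}")
```

The sketch classifies isolated character images only. Recognizing whole words and lines of handwriting, as HTR systems aim to do, requires considerably more machinery.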

The Adams Papers pilot project produced promising results: an accuracy rate of more than 86% when tested on all 22 characters, and more than 96% when tested on four of the characters.

“Historical manuscripts are essential for humanities research and these funds will help scholars engage with unique and distinctive collections in a way they couldn’t before,” says Greg Colati, assistant University librarian for University Archives, Special Collections & Digital Curation.

The LYRASIS grant will allow the Library and the Computing, Computer Science & Engineering Department in the School of Engineering to extend this work to additional volumes of handwritten documents in the John Adams Papers. The goals are to expand the datasets, refine the neural networks, and release an updated version to the public.

LYRASIS is a nonprofit organization whose mission is to support enduring access to the world’s shared academic, scientific, and cultural heritage through leadership in open technologies, content services, digital solutions, and collaboration with archives, libraries, museums, and knowledge communities worldwide. The grant is part of its Catalyst Fund, which supports new ideas and innovative projects that explore, test, refine, and collaborate on innovations with community-wide impact.

The CTDA is a service of the UConn Library that preserves and provides access to digital assets related to Connecticut and created by Connecticut-based, not-for-profit educational, cultural, and historical institutions, including libraries, archives, galleries, and museums.