It is hard to get people excited about software, says Eliza Grames, a doctoral candidate in ecology and evolutionary biology. Yet the software she has developed is exciting for anyone about to embark on a new research project and trying to determine whether it’s actually … new.

Put yourself in the shoes of a researcher.

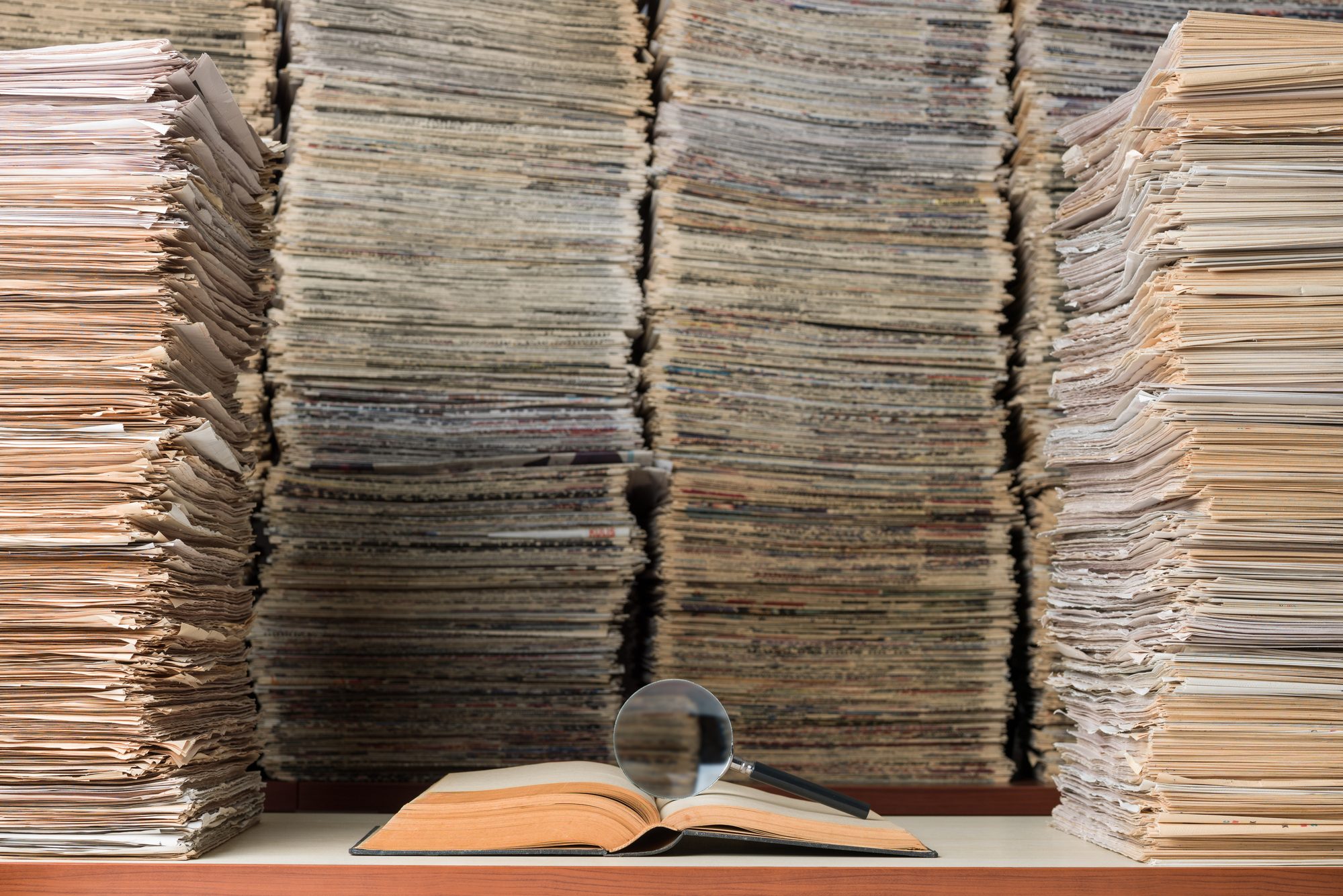

Before starting any new study, researchers must conduct a thorough and exhaustive review of the existing literature, both to make sure the project is novel and to determine whether existing data could be used to answer their new question.

This is a daunting task, especially considering that millions of new research articles are published each year. Where does one even begin to explore all of that data?

“Each new study contributes more to what we know about a topic, adding nuance and complexity that helps improve our understanding of the natural world. To make sense of this wealth of evidence and get closer to a complete picture of the world, researchers are increasingly turning to systematic review methods as a way to synthesize this information,” says Grames.

Systematic reviews started in the fields of medicine and public health, where keeping current with research can be, quite literally, a question of life or death, says Grames. (Ever wonder how your doctor knows about the latest treatments for your condition?)

“In those fields, there is an established system, Medical Subject Headings, where articles get tagged with keywords associated with the work, but ecology does not have that.”

Other fields of research across the scientific spectrum were in the same boat.

The project sprang out of necessity. During her own reviews, Grames noticed that she was missing articles and key terms, and she wanted to find a way to identify those missing terms. So she decided to create a system that researchers in ecology, environmental science, conservation biology, evolutionary biology, and other sciences could use.

“As we were working on this software, we realized there was a much faster way to do the reviews than how others were doing them,” says Grames. “The traditional way was mostly going through papers and pulling out a term and then reading the rest of the article to identify more terms to use.”

Even with fairly specific keywords, Grames notes, the average systematic review in her field of conservation biology initially turns up about 10,000 research papers. Retrieving the relevant papers is essential, but wading through irrelevant ones adds unnecessary time to the review.

“Each year, the amount of data just keeps increasing. There are some systematic reviews that if you look at the amount of time they would have taken just three years ago, they would take about 300 days to perform. If the same reviews were done today, they would take about 350 days because the number of publications just keeps going up and up.”

Grames says it took about a month to hash out ideas for the software, and then she spent a summer writing and fixing the code. The result is an open-source software package called litsearchr.

Here is how it works, says Grames: a user first runs a search in a few databases.

“The keywords should be fairly relevant. [The results are] entered into the algorithm to extract all of the potential keywords, which are then put into a network. The original keywords are at the center of the network and are the most well-connected.”
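The network idea Grames describes can be illustrated with a toy sketch. This is not litsearchr's code or API; it is a minimal Python illustration under two assumptions: two candidate terms are linked whenever they appear in the same title, and a term's importance is its "node strength," the summed weight of all its links. The function names, candidate terms, and sample titles below are hypothetical.

```python
from collections import defaultdict
from itertools import combinations


def cooccurrence_network(docs, terms):
    """Map each pair of terms to the number of documents that mention both.

    Matching is naive, case-insensitive substring search (an assumption made
    for brevity), so "bird" also matches "birds".
    """
    edges = defaultdict(int)
    for doc in docs:
        text = doc.lower()
        present = sorted(t for t in terms if t in text)
        for a, b in combinations(present, 2):
            edges[(a, b)] += 1  # keys are ordered pairs with a < b
    return dict(edges)


def node_strength(edges, terms):
    """Weighted degree: sum the weights of every edge touching each term."""
    strength = {t: 0 for t in terms}
    for (a, b), weight in edges.items():
        strength[a] += weight
        strength[b] += weight
    return strength


def rank_terms(docs, terms):
    """Order terms from most to least connected (ties broken alphabetically)."""
    strength = node_strength(cooccurrence_network(docs, terms), terms)
    return sorted(terms, key=lambda t: (-strength[t], t))


if __name__ == "__main__":
    # Hypothetical titles returned by a naive search on "bird" and "forest".
    docs = [
        "Habitat fragmentation reduces bird abundance in forest patches",
        "Effects of habitat fragmentation on insect pollinators",
        "Bird abundance and road noise",
        "Forest edge effects on bird nesting success",
    ]
    terms = ["habitat fragmentation", "bird", "insect", "forest", "road noise"]
    print(rank_terms(docs, terms))
    # prints ['bird', 'forest', 'habitat fragmentation', 'insect', 'road noise']
```

In this toy run, "bird" appears in three of the four titles and ends up at the center of the network with the highest strength. Weakly connected terms such as "road noise" sit at the edge; a real tool would presumably apply some cutoff to decide which terms to keep, which is left out here.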

Grames says the software reduces the time required to develop a search strategy by 90%.

Presented with the most relevant articles, researchers then have far fewer papers to screen manually. That screening stage is now partially automated, too, Grames adds.

Litsearchr is part of metaverse, a collaborative effort among researchers to link several software packages together so that researchers can carry out their work from start to finish in a single programming language, R.

“Researchers can develop their systematic reviews, import data, and there is even a package that can write up the results section for the systematic review,” says Grames.

Grames and her team set up the software so that anyone can use it, whether they can code or not, through ready-made templates. There is also a detailed video that walks users through the process step by step.

Grames says keeping the software open source improves debugging and editing, because users can point out details that need attention. “Every time I get an email, it is so exciting. It is nice to have it open because people can let me know when there is a typo.”

The software is currently being used by researchers in nutritional science and psychology, and in a massive project screening every paper on insect populations around the globe.

“There is no way we could do this project without the level of automation we get using litsearchr. I built this out of a need from another project, but this software is making it possible to do even bigger analyses than before.”

Grames is funded by a University of Connecticut Outstanding Scholars Fellowship.