Details are important in science. If even a few are left out, decisions or research conclusions can be completely altered. Whether the question is what explains differences in feather coloration in a sparrow, or whether stockpiling a certain medication is a good idea, getting a clear look at the full body of evidence on a topic is vital.

How does one go about gathering all of the information without leaving anything out? An international team of researchers met at the Evidence Synthesis Hackathon to work out the best way to approach the problem through discussions and software development.

In research published this week in Nature Ecology & Evolution, the team suggests a complete overhaul of the current process and describes a framework for coordinating and optimizing the methods used to synthesize as much of the available data on a subject as possible.

For a given subject, there could be published literature going back decades. There may also be a wealth of unpublished or difficult-to-find information that is overlooked and therefore goes to waste.

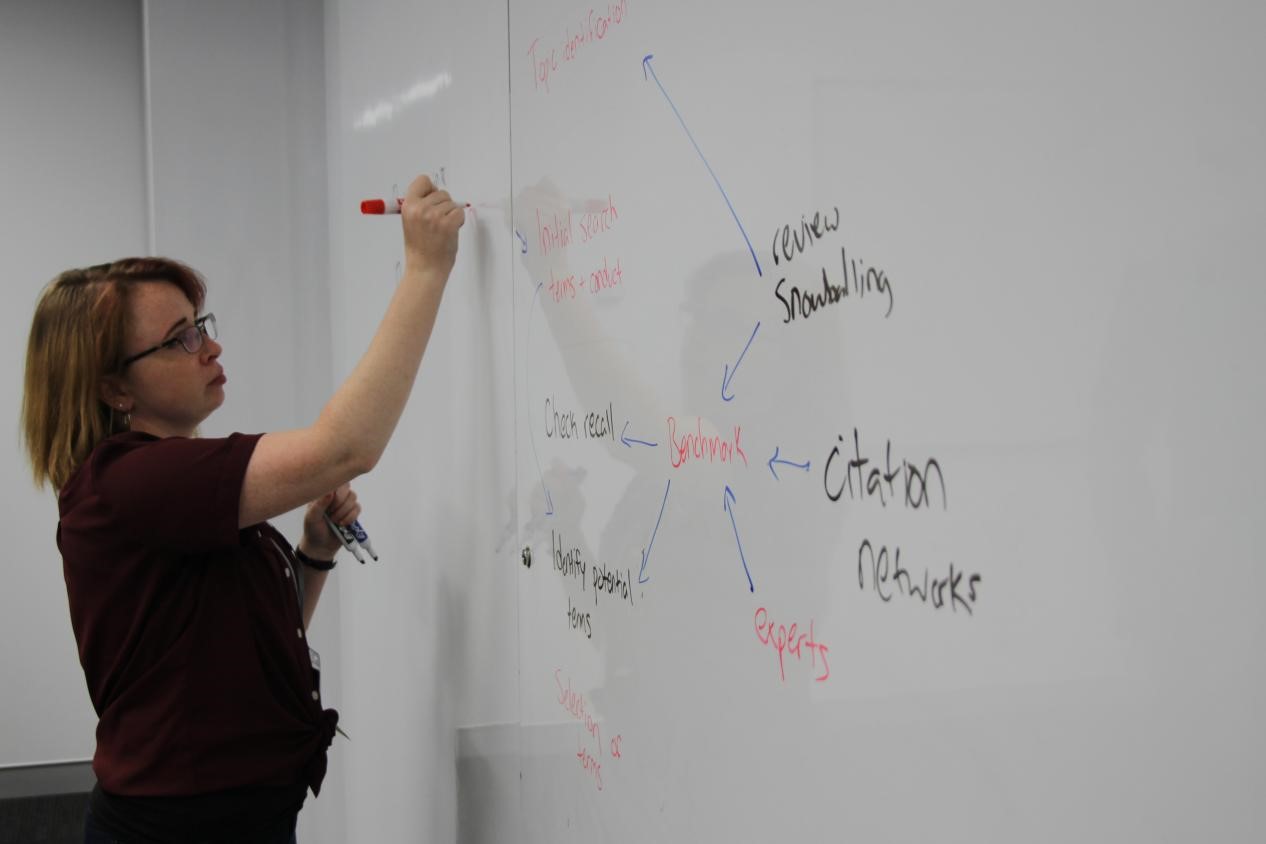

UConn ecology and evolutionary biology PhD candidate Eliza Grames is one of the authors and hackathon participants. Grames explains that research synthesis historically has largely been a two-step undertaking.

“The idea is to find all of the evidence that is out there and sort through it to come to more general conclusions about a topic,” says Grames. “The typical process is to do a really thorough search through the literature and then apply a predetermined set of criteria to the articles that you get back.”

The result of this information gathering could be a qualitative, quantitative, or narrative synthesis of the data. But if it uses only published data, how accurate is it?

For example, Grames says that when one of the hackathon participants applied a more thorough evidence synthesis to the sparrow feather coloration question mentioned above, the outcome challenged what had been accepted as a ‘classic example of a badge of status’ for the birds. When the updated evidence synthesis was performed, including unpublished data, there was little evidence to support the widely accepted theory.

The team’s approach aims to bridge a communication gap that has grown between empirical scientists and the scientists who perform the data syntheses. The collaborative open synthesis community approach developed at the hackathon may be the solution needed for the arduous, inefficient, and incomplete evidence synthesis process currently in use.

Grames hopes the framework will encourage others to embrace this open, transparent, community-driven approach as a way to improve how evidence is synthesized.

The transparent, open synthesis community component of this work is key, says Grames. “Somebody knows what we don’t know, so if we make it completely open and a community of people contribute to the synthesis then we can figure out what we collectively know and what we collectively don’t know.”

See the process and the people involved in the Evidence Synthesis Hackathon, which took place in Canberra, Australia, in April 2019. All photos were taken by Neal Haddaway.

DOI: 10.1038/s41559-020-1153-2

Grames was funded by a UConn Doctoral Student Travel Award and a Travel Bursary from the Evidence Synthesis Hackathon to attend.